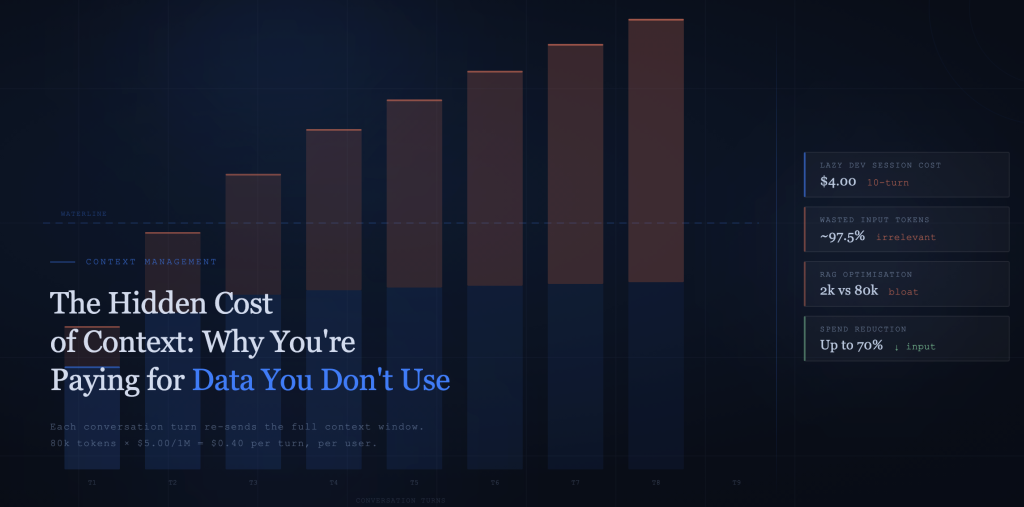

The Hidden Cost of Context: Why You’re Paying Too Much for Data You Don’t Use

Large Language Model (LLM) development is in the middle of a frenetic “feature race” centered on one metric: context window size. A year ago, 32k tokens felt revolutionary. Today, providers like OpenAI, Google, and Anthropic are normalizing windows of 128k, 200k, and even 1 million tokens. For AI engineers and architects, […]
