Imagine you’re running a shipping company. You’ve got the trucks. You’ve got the warehouses. You’ve got a fleet management system that tracks fuel consumption down to the liter.

But every time a customer wants to ship a package, they have to walk into the warehouse, find a truck driver, negotiate which vehicle to use, and manually check the fuel costs themselves.

That’s how most enterprises run AI today.

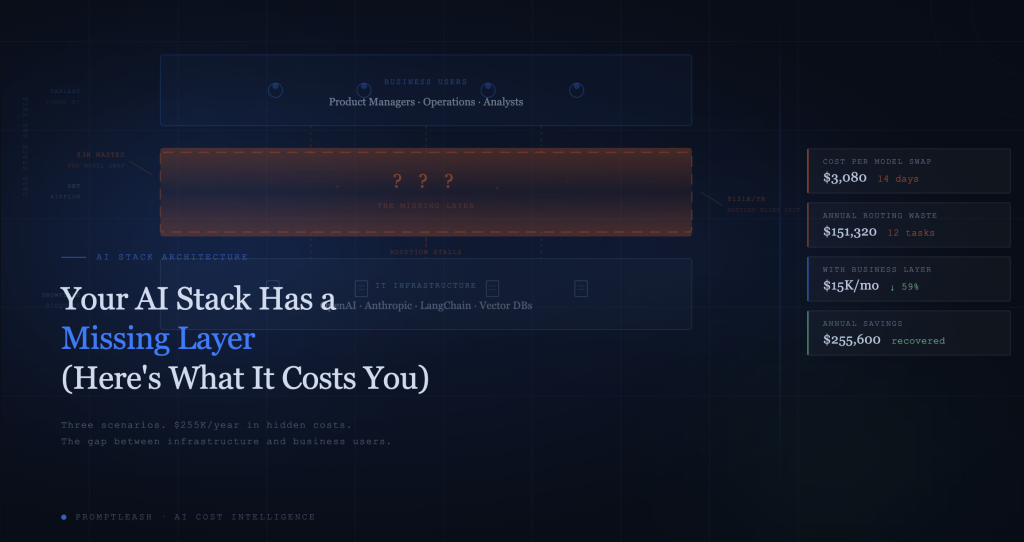

The infrastructure layer is impressive. LLM providers, vector databases, agent frameworks, RAG pipelines: engineering teams have built serious machinery. But between all that infrastructure and the business users who actually need AI to do their jobs, there’s a gap. A big, expensive, invisible gap.

We call it the missing middle layer. And it’s costing you more than you think.

The Three-Layer Problem

Every mature technology stack has three layers. Think about how your company runs data today:

| Layer | Data Stack | AI Stack (Today) |
| --- | --- | --- |
| Business Users | Tableau, Power BI, Looker | ??? |
| Middleware | dbt, Airflow, Fivetran | ??? |
| Infrastructure | Snowflake, BigQuery, Postgres | OpenAI, Anthropic, LangChain |

See the problem? The data world figured this out years ago. Nobody asks a product manager to write SQL queries against a raw Snowflake instance. There’s always a layer, a business layer, that translates infrastructure capability into something humans can actually use.

AI hasn’t got there yet. Most companies have a perfectly functional infrastructure layer at the bottom (LLM providers, embedding models, vector stores, agent frameworks) and business users at the top who need AI to classify tickets, summarise documents, automate workflows, and generate reports.

Between those two layers: nothing. A void filled with Slack messages to the engineering team and a six-week backlog.

What the Missing Layer Actually Costs You

Let’s make this concrete. Here are three scenarios we see in almost every enterprise we talk to. The numbers are composites, but they’re conservative.

Scenario 1: The PM Who Waited Two Weeks

A product manager at a Series B SaaS company wants to swap their customer feedback classifier from Claude Opus to Haiku. The task is simple: classification doesn’t need frontier-model intelligence. But the PM can’t make the change themselves. It’s an API call buried in a Python microservice.

So they file a ticket. It sits in the sprint backlog for 9 days. An engineer picks it up, tests it, deploys it. Total elapsed time: 14 days.

| Cost Component | Amount |
| --- | --- |
| 14 days of overspend on Opus vs Haiku | $1,680 (based on 60K calls/day) |
| Engineer time (4 hours @ $150/hr) | $600 |
| PM productivity lost (waiting, context-switching) | ~$800 (conservative) |
| Total cost of one model swap | $3,080 |

For one model swap. Now multiply that by every PM, every ops lead, every analyst who has an idea for how AI could improve their workflow but can’t action it without engineering.
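The arithmetic behind that total can be sketched in a few lines of Python. The inputs come straight from the table; the per-call premium is not quoted in the scenario and is only what the $1,680 figure implies at 60K calls/day, so treat it as a derived assumption.

```python
# Figures taken from the Scenario 1 table (USD).
CALLS_PER_DAY = 60_000
DAYS_ELAPSED = 14
MODEL_OVERSPEND = 1_680        # Opus vs Haiku over the 14 days
ENGINEER_COST = 4 * 150        # 4 hours at $150/hr
PM_COST = 800                  # the scenario's conservative estimate

# Derived values (assumptions implied by the table, not quoted prices).
daily_overspend = MODEL_OVERSPEND / DAYS_ELAPSED
premium_per_call = daily_overspend / CALLS_PER_DAY


def swap_cost(days_waiting: int) -> float:
    """Total cost of one model swap that ships after `days_waiting` days."""
    return daily_overspend * days_waiting + ENGINEER_COST + PM_COST


print(f"Overspend per day of delay: ${daily_overspend:,.0f}")
print(f"Implied premium per call:   ${premium_per_call:.4f}")
print(f"Cost of the 14-day swap:    ${swap_cost(14):,.0f}")
```

Every extra day in the backlog adds the same daily overspend, which is why the elapsed time matters more than the four hours of engineering work.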

Scenario 2: The $12,600/Month Blind Spot

An operations team at a mid-market company runs 12 AI-powered workflows: ticket classification, document summarisation, email drafting, data extraction, sentiment analysis, and more. All 12 are routed to GPT-4o.

Nobody chose GPT-4o deliberately. It was the model the first engineer set up, and it propagated. There’s no cost-per-task visibility, no model comparison, and no one has the time or access to benchmark alternatives.

| Task Category | Current (GPT-4o) | Optimal Routing |
| --- | --- | --- |
| Classification (4 tasks) | $6,200/mo | $380/mo (Haiku) |
| Summarisation (3 tasks) | $4,800/mo | $1,440/mo (Sonnet) |
| Complex reasoning (2 tasks) | $5,100/mo | $5,100/mo (keep GPT-4o) |
| Extraction & drafting (3 tasks) | $4,900/mo | $1,470/mo (Sonnet) |
| Total | $21,000/mo | $8,390/mo |

Monthly waste: $12,610. Annual waste: $151,320.

Quality impact? Negligible. We’ve written about this in detail: classification and extraction tasks don’t need frontier models. But without a business layer that surfaces this data and makes routing actionable, nobody even knows the waste exists.
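This is roughly the report a business layer would need to surface. As a minimal sketch, the waste figures can be reproduced from the Scenario 2 table; the dictionary keys are illustrative names, and the dollar amounts are the composite numbers from the table, not measured prices.

```python
# Monthly spend per task category, copied from the Scenario 2 table (USD).
current = {
    "classification": 6_200,
    "summarisation": 4_800,
    "complex_reasoning": 5_100,
    "extraction_drafting": 4_900,
}
optimal = {
    "classification": 380,
    "summarisation": 1_440,
    "complex_reasoning": 5_100,
    "extraction_drafting": 1_470,
}

monthly_waste = sum(current.values()) - sum(optimal.values())
annual_waste = monthly_waste * 12
waste_share = monthly_waste / sum(current.values())

# Per-category view: where the savings actually come from.
for task, spend in current.items():
    print(f"{task:<20} saves ${spend - optimal[task]:>6,}/mo")

print(f"Monthly waste: ${monthly_waste:,}")
print(f"Annual waste:  ${annual_waste:,}")
print(f"Share of current spend: {waste_share:.0%}")
```

The per-category breakdown shows that classification alone accounts for almost half of the waste, which is the kind of detail a single-provider invoice does not reveal.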

Scenario 3: The Analyst Who Gave Up

A data analyst at a healthcare company wants to build an AI workflow that summarises clinical trial reports and flags key findings. She’s got the domain expertise. She knows what “good” looks like. She could design the workflow in an afternoon.

But she can’t. There’s no self-serve interface. Building the workflow requires API keys, Python, and deployment infrastructure. She’d need to get approval from IT, wait for engineering capacity, and then sit through three rounds of requirements gathering where she explains the same thing she could have just built herself.

So she doesn’t. She goes back to reading reports manually. The AI investment that was supposed to transform her workflow sits unused.

| Hidden Cost | Impact |
| --- | --- |
| Analyst time on manual work | ~15 hrs/week = $45K/year in labor |
| Delayed insights reaching decision-makers | Unquantifiable but real |
| AI adoption stalls across the org | Other teams see analyst’s experience, don’t bother trying |
| ROI of AI infrastructure investment | Fraction of potential |

This is the cost nobody puts on a spreadsheet. It’s not overspend, it’s underspend. The AI capability exists. The business need exists. But there’s no layer connecting them.

The Four Capabilities of a Business Layer

So what does a proper business layer actually do? Based on the patterns we see across dozens of companies, it comes down to four capabilities:

| Capability | What It Does | Without It |
| --- | --- | --- |
| Smart Routing | Auto-selects the optimal model for each task based on complexity, cost, and quality thresholds | Engineers manually pick models. Nobody revisits the choice. Costs compound. |
| Cost Control | Budget guardrails, spend alerts, circuit breakers for runaway agents | The $10,000 weekend. No alerts until the invoice. |
| Analytics | Cost-per-task visibility, model utilisation, savings opportunities surfaced automatically | Single-provider dashboards. No cross-provider view. No task-level granularity. |
| No-Code Access | Business users build and modify AI workflows without writing code or filing tickets | Every change requires engineering. Adoption bottlenecks. Business users give up. |

If this reminds you of what Salesforce did for CRM, or what Stripe did for payments, that’s not a coincidence. Every infrastructure category eventually grows a business layer. The infrastructure is necessary but not sufficient. The value only materialises when the people who understand the business problems can actually use the tools.
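To make the routing capability concrete, here is a deliberately small sketch of routing expressed as editable configuration rather than code buried in a microservice. Everything in it is hypothetical: the `ROUTES` table, the `route` and `set_route` functions, and the model labels, which are plain strings echoing the Scenario 2 table rather than real API model identifiers. A production router would also weigh measured cost and quality, which this sketch leaves out.

```python
# Hypothetical routing table: task category -> model label.
# The mapping mirrors the "Optimal Routing" column in Scenario 2.
ROUTES = {
    "classification": "haiku",
    "summarisation": "sonnet",
    "extraction": "sonnet",
    "drafting": "sonnet",
    "complex_reasoning": "gpt-4o",
}

# Assumption: unknown task types fall back to the most capable model.
DEFAULT_MODEL = "gpt-4o"


def route(task_type: str) -> str:
    """Return the model label configured for a task category."""
    return ROUTES.get(task_type, DEFAULT_MODEL)


def set_route(task_type: str, model: str) -> None:
    """Change a route at runtime; a stand-in for a self-serve settings screen."""
    ROUTES[task_type] = model


print(route("classification"))   # configured route
print(route("contract_review"))  # unknown task type, uses the fallback

# The Scenario 1 swap becomes a one-line configuration change.
set_route("feedback_classifier", "haiku")
print(route("feedback_classifier"))
```

The design choice worth noting is that the model decision lives in data, not in application code, so changing it does not need a deployment.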

The Maths: What a Business Layer Saves at Scale

Let’s model a typical mid-market company with 50+ AI agents in production and $20K/month in LLM spend.

| Cost Category | Without Business Layer | With Business Layer |
| --- | --- | --- |
| Direct LLM spend | $20,000/mo | $12,000/mo |
| Eng time on model ops (swaps, debugging, routing) | $8,000/mo (est. 0.5 FTE) | $2,000/mo |
| Runaway agent incidents (avg 1/quarter) | $3,300/mo (amortised) | $0 (circuit breakers) |
| Lost business user productivity | $5,000/mo (conservative) | $1,000/mo |
| Total monthly cost of AI operations | $36,300/mo | $15,000/mo |
| Annual savings | | $255,600 |

The direct LLM savings alone, the 40% we’ve written about before, justify the investment. But the real multiplier is the indirect savings: engineering time recovered, incidents prevented, business users unblocked.
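The split between direct and indirect savings can be worked out from the model above. This is a small sketch using only the table's figures; the row names are illustrative labels.

```python
# (without business layer, with business layer), monthly USD, from the table.
rows = {
    "direct_llm_spend": (20_000, 12_000),
    "eng_time_model_ops": (8_000, 2_000),
    "runaway_incidents": (3_300, 0),
    "lost_user_productivity": (5_000, 1_000),
}

savings = {name: before - after for name, (before, after) in rows.items()}

monthly_total = sum(savings.values())
direct = savings["direct_llm_spend"]
indirect = monthly_total - direct

print(f"Direct LLM savings: ${direct:,}/mo ({direct / rows['direct_llm_spend'][0]:.0%} of LLM spend)")
print(f"Indirect savings:   ${indirect:,}/mo")
print(f"Total savings:      ${monthly_total:,}/mo, ${monthly_total * 12:,}/yr")
print(f"Indirect share of total: {indirect / monthly_total:.0%}")
```

On these numbers the indirect categories contribute more than the direct LLM savings, which is the multiplier the paragraph above describes.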

Why Now?

Three things changed in the last 12 months that make the business layer not just useful, but urgent:

1. Model proliferation. A year ago, most teams used one provider. Now the average enterprise AI team juggles 3–4 providers and 6+ models. Manual model selection doesn’t scale past two options.

2. Agentic AI. Agents don’t just answer questions; they loop, retry, call tools, and spawn sub-agents. Without guardrails, they’re a $10,000 weekend waiting to happen. The business layer provides circuit breakers that agent frameworks don’t.

3. AI spend crossed the visibility threshold. Below $5K/month, nobody cares. Above that, CFOs start asking questions. Above $20K, it’s a line item that needs governance. The companies that scale AI successfully are the ones who build cost visibility before the CFO asks for it.
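The circuit-breaker idea from the second point can be sketched as a spend guard wrapped around an agent loop. This is an illustration under stated assumptions, not how any particular agent framework or product works: `BudgetGuard`, `BudgetExceeded`, the 80% alert ratio, the $100 limit, and the $2.50 step cost are all hypothetical.

```python
class BudgetExceeded(RuntimeError):
    """Raised when cumulative spend passes the configured limit."""


class BudgetGuard:
    def __init__(self, limit_usd: float, alert_ratio: float = 0.8):
        self.limit_usd = limit_usd
        self.alert_ratio = alert_ratio  # assumption: warn at 80% of budget
        self.spent_usd = 0.0
        self.alerted = False

    def record(self, cost_usd: float) -> None:
        """Add the cost of one completed call; alert once, then trip."""
        self.spent_usd += cost_usd
        if not self.alerted and self.spent_usd >= self.alert_ratio * self.limit_usd:
            self.alerted = True
            print(f"ALERT: ${self.spent_usd:.2f} of ${self.limit_usd:.2f} used")
        if self.spent_usd > self.limit_usd:
            raise BudgetExceeded(
                f"spent ${self.spent_usd:.2f}, limit ${self.limit_usd:.2f}"
            )


guard = BudgetGuard(limit_usd=100.0)
steps = 0
try:
    while True:                 # stands in for an agent stuck in a retry loop
        guard.record(2.50)      # hypothetical cost of one agent step
        steps += 1
except BudgetExceeded as exc:
    print(f"Agent halted after {steps} steps: {exc}")
```

Because spend is recorded after each call completes, the guard can overshoot the limit by at most one step; a stricter variant would estimate the cost before the call and refuse to make it.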

The Gap Won’t Close Itself

Your AI infrastructure is probably fine. Your LLM providers are capable. Your engineering team has built impressive things.

The problem isn’t what you’ve built, it’s what sits between what you’ve built and the people who need to use it. That middle layer is where cost gets controlled, where adoption actually happens, and where the ROI of your AI investment gets realised.

Every dollar wasted on overqualified models is a dollar not shipping product. Every business user blocked by an engineering backlog is a use case that doesn’t get built. Every runaway agent without a circuit breaker is an invoice shock that erodes trust in AI.

The missing layer isn’t a nice-to-have. It’s the difference between an AI experiment and an AI-powered company.

PromptLeash is the business layer for your AI stack. Smart routing that cuts spend 40%. Cost analytics that surface waste automatically. Guardrails that prevent runaway agents. And a path to self-serve AI for the business users who actually know what needs building.

We’re in early access with AI-native companies now. Join the waitlist at promptleash.ai and stop paying the hidden tax on your missing middle layer.