Imagine hiring a Fields Medal-winning mathematician to sit in a restaurant and calculate a 15% tip on a $40 lunch bill. They would certainly get the answer right. But you would be paying hundreds of dollars an hour for a task that a $5 pocket calculator could do instantly.

This sounds absurd, yet it is exactly what many enterprise engineering teams are doing right now.

We are suffering from an epidemic of “Model Laziness.” When building AI features, the path of least resistance is to connect to a single flagship API endpoint (like GPT-5.2 or Claude Sonnet 4.5) and use it for absolutely everything. It “just works,” so nobody questions the bill until it scales.

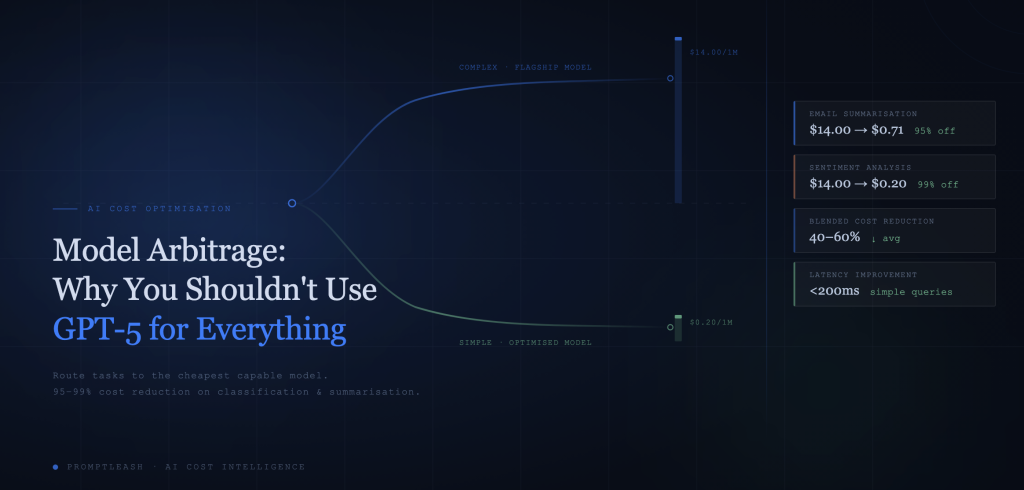

True AI optimisation isn’t just about negotiating volume discounts with OpenAI. It is about Model Arbitrage: the strategic practice of routing tasks to the cheapest, fastest model that is capable of performing the specific job at hand.
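As a minimal sketch of that selection rule, the Python below picks the lowest-priced model whose capability meets a task's requirement. The catalogue, model labels, prices, and integer `capability` tiers are hypothetical placeholders, not real API identifiers and not PromptLeash's method. Latency is left out for brevity, and in practice deciding what capability a task needs is the hard part.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str            # hypothetical label, not a real API model ID
    price_per_1m: float  # placeholder USD price per 1M tokens
    capability: int      # assumed tier: 1 = basic, 2 = intermediate, 3 = frontier


# Hypothetical catalogue for illustration only.
CATALOGUE = [
    ModelOption("small-fast-model", 0.71, 1),
    ModelOption("mid-tier-model", 3.00, 2),
    ModelOption("flagship-model", 14.00, 3),
]


def cheapest_capable(required_capability: int,
                     catalogue: list[ModelOption] = CATALOGUE) -> ModelOption:
    """Return the lowest-priced model whose capability tier meets the requirement."""
    candidates = [m for m in catalogue if m.capability >= required_capability]
    if not candidates:
        raise ValueError(f"no model meets capability tier {required_capability}")
    return min(candidates, key=lambda m: m.price_per_1m)


print(cheapest_capable(1).name)  # small-fast-model
print(cheapest_capable(3).name)  # flagship-model
```

A greeting would map to tier 1 and land on the cheap model; only tasks that genuinely need tier 3 pay flagship prices.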

The Economics of “Good Enough”

In financial markets, arbitrage exploits price differences for the same asset. In AI Engineering, “Model Arbitrage” exploits price differences for the same functional output.

If a user types “Hello” into your chatbot, the functional output required is a polite greeting.

- GPT-5.2 will generate that greeting for $5.00 / 1M tokens.

- Llama-3-8B will generate that same greeting for $0.71 / 1M tokens.

The output is identical. The user experience is identical. But at these prices, one costs roughly seven times as much as the other ($5.00 ÷ $0.71 ≈ 7).

Furthermore, cost isn’t the only variable. “Dumber” models are significantly faster. While a reasoning model “thinks” about a simple request, a smaller model has already returned the answer. By relying exclusively on flagship models, you aren’t just burning cash; you are actively introducing unnecessary latency into your application.

The Data: The Massive Cost Disparity of Identical Tasks

Let’s look at the numbers. When we break down common enterprise AI tasks, the waste becomes undeniable.

| Task Type | “Overkill” Model (e.g., GPT-5.2) | “Optimised” Model (e.g., Llama 3, Haiku) | Cost Reduction |
| --- | --- | --- | --- |
| Email Summarisation | ~$14.00 / 1M tokens | ~$0.71 / 1M tokens | ~95% cheaper |
| Sentiment Analysis | ~$14.00 / 1M tokens | ~$0.20 / 1M tokens | ~99% cheaper |
| Entity Extraction | ~$14.00 / 1M tokens | ~$0.60 / 1M tokens | ~96% cheaper |
| Complex Code Gen | ~$14.00 / 1M tokens | (Top tier necessary) | N/A |

For tasks like Sentiment Analysis or simple Classification, using a flagship model is burning capital with zero added value. The “Optimised” model is not just cheaper; for these specific tasks, it is functionally indistinguishable from the flagship.
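The percentages in the table's last column follow directly from the listed prices: saving = 1 − (optimised price ÷ flagship price). A short Python check, using only the approximate per-1M-token prices from the table (no other data is assumed):

```python
# Approximate per-1M-token prices copied from the comparison table.
FLAGSHIP_PRICE = 14.00
OPTIMISED_PRICES = {
    "Email Summarisation": 0.71,
    "Sentiment Analysis": 0.20,
    "Entity Extraction": 0.60,
}


def cost_reduction_pct(flagship: float, optimised: float) -> float:
    """Percentage saved by moving a task from the flagship price to the optimised price."""
    return (1 - optimised / flagship) * 100


for task, price in OPTIMISED_PRICES.items():
    pct = cost_reduction_pct(FLAGSHIP_PRICE, price)
    ratio = FLAGSHIP_PRICE / price
    print(f"{task}: ~{pct:.0f}% cheaper ({ratio:.0f}x price difference)")

# Email Summarisation: ~95% cheaper (20x price difference)
# Sentiment Analysis: ~99% cheaper (70x price difference)
# Entity Extraction: ~96% cheaper (23x price difference)
```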

This is where PromptLeash can help: it identifies which use cases can run on lower-cost models and where your usage can be optimised.

The Challenge of Manual Routing

If the math is so obvious, why isn’t every DevOps team doing this?

Because it is hard to maintain.

To implement model arbitrage manually, you have to build complex logic chains into your application layer. You end up with spaghetti code made of if/then statements:

- If prompt length < 50 tokens, use Haiku.

- If topic contains “legal”, use GPT-5.

This creates a maintenance nightmare. New models are released weekly. Pricing changes monthly. If you hardcode your routing logic, your engineering team spends half their time updating API keys and refactoring model calls rather than building features. It is technical debt waiting to happen.
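A rough Python rendering of the two rules above shows how quickly they get brittle. The token count is a crude whitespace split (real tokenisers count differently), the returned strings are plain labels rather than API model IDs, and the fallback for long, non-legal prompts is an assumption, since the rules don't cover that case. Rule order also matters: a short prompt that mentions “legal” hits the length rule first and goes to Haiku.

```python
def count_tokens_roughly(prompt: str) -> int:
    # Crude approximation: whitespace-separated words, not real tokenizer output.
    return len(prompt.split())


def route_manually(prompt: str) -> str:
    """Hardcoded routing rules of the kind described above; labels are illustrative."""
    if count_tokens_roughly(prompt) < 50:
        return "Haiku"   # short prompt -> cheap model (checked first, so it wins)
    if "legal" in prompt.lower():
        return "GPT-5"   # legal topic -> flagship model
    return "GPT-5"       # assumed default for everything else


print(route_manually("Hello"))                    # Haiku
print(route_manually("Is this contract legal?"))  # Haiku, despite the legal topic
```

Every new model, price change, or edge case means another branch, and another chance for the branches to conflict.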

Intelligent Model Costing with PromptLeash

This is where PromptLeash changes the equation. We analyse what you are using each model for and identify where those workloads can be optimised.

Real-World Example: The Customer Support Triage Bot

Consider a standard customer support agent.

Without PromptLeash (the “lazy” way):

Every single message—from “Hi” to “My server is on fire”—goes to GPT-5.2.

- Result: High Cost, Moderate Latency.

After a PromptLeash assessment and optimisation:

- Query A: “How do I reset my password?”
  - Best Route: Llama-3-8B or Claude Haiku.
  - Cost: Negligible.
  - Latency: Instant (<200ms).

- Query B: “My Kubernetes deployment is failing with a CrashLoopBackOff error on node 3.”
  - Best Route: GPT-5.2 or Claude Sonnet 4.5.
  - Cost: Higher (but justified).
  - Latency: Standard.

The Result: Your Blended Cost (the average cost per interaction) drops by 40-60%, while your users actually perceive the bot as faster because the simple queries are answered instantly.
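Blended cost is a traffic-weighted average of per-model prices. The sketch below computes it per 1M tokens, which only approximates cost per interaction if simple and complex queries use similar token counts (an assumption). The prices come from the comparison table earlier in this article; the 60/40 split between simple and complex traffic is hypothetical, not measured data.

```python
def blended_cost(traffic_share: dict[str, float], price_per_1m: dict[str, float]) -> float:
    """Weighted average price per 1M tokens, given the share of traffic sent to each model."""
    if abs(sum(traffic_share.values()) - 1.0) > 1e-9:
        raise ValueError("traffic shares must sum to 1")
    return sum(share * price_per_1m[model] for model, share in traffic_share.items())


# Prices from the comparison table; the traffic split is an assumed example.
prices = {"flagship": 14.00, "small": 0.71}
before = blended_cost({"flagship": 1.0}, prices)             # everything on the flagship
after = blended_cost({"small": 0.6, "flagship": 0.4}, prices)  # 60% simple, 40% complex

print(f"before: ${before:.2f}, after: ${after:.2f}, saving: {1 - after / before:.0%}")
# before: $14.00, after: $6.03, saving: 57%
```

The saving is driven almost entirely by the share of traffic that can safely move to the cheaper tier, so measuring that share is the first step.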

Stop Paying PhD Prices for Simple Math

Efficiency isn’t about using the cheapest tool; it’s about matching the tool to the job. You wouldn’t use a sledgehammer to hang a picture frame, and you shouldn’t use a reasoning model to summarise a 5-line email.

The companies that win in the next phase of AI adoption won’t be the ones with the most powerful models; they will be the ones with the most efficient operations.

Stop overpaying for simple tasks. Schedule a demo with PromptLeash today and see how we can cut your AI bill by 40% instantly without touching your code.