In the current landscape of Large Language Model (LLM) development, we are witnessing a frenetic “feature race” centered on one specific metric: context window size.

A year ago, 32k tokens felt revolutionary. Today, providers like OpenAI, Google, and Anthropic are normalizing windows of 128k, 200k, and even 1 million tokens. For AI engineers and architects, this sounds like a dream scenario. The days of complex chunking strategies and agonizing over what data to exclude from a prompt seem to be ending. Why worry about retrieval precision when you can just dump your entire database, three relevant PDFs, and the last six months of conversation history directly into the prompt?

It feels like unlimited RAM. But it isn’t. It’s a massive pricing trap.

While impressive, these enormous context windows have introduced the single biggest driver of invisible waste in enterprise AI spending: token costs incurred by irrelevant context.

The critical misunderstanding lies in how LLM pricing works. You do not pay for context once, the way you load data into memory. You pay for every token in your input prompt, every time you send a request to the model. Treating an active context window like a passive hard drive is a fundamental architectural error, and it can inflate inference costs by an order of magnitude or more.

This post is a technical deep-dive into the economics of oversized context windows, the performance degradation they cause, and how to implement rigorous context window optimization.

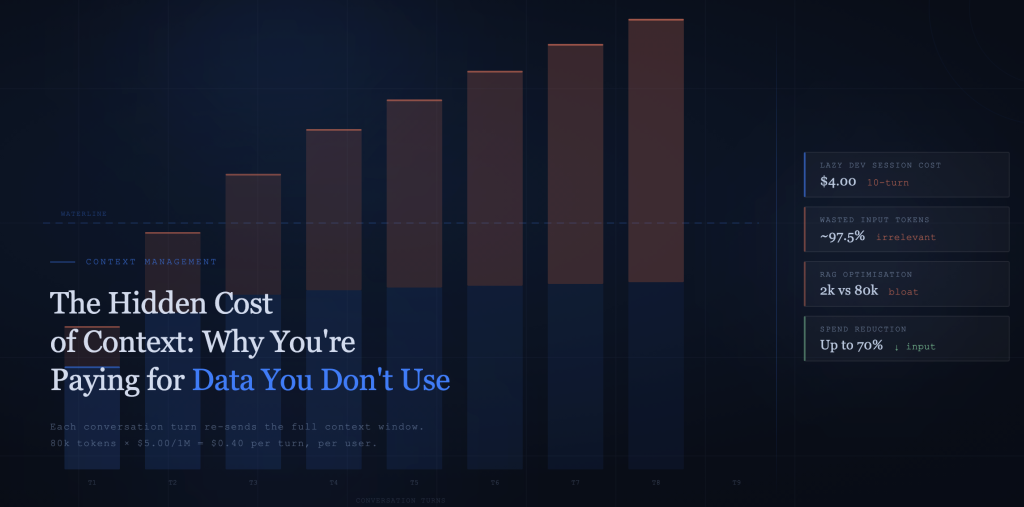

The Math of the “Lazy Developer Tax”

Let’s move from abstract concepts to concrete unit economics. The most common source of context bloat is poorly optimized Retrieval-Augmented Generation (RAG) systems.

The Scenario

Imagine you are building an internal AI assistant designed to answer employee questions based on a repository of corporate policy PDFs.

A user asks: “What is the reimbursement policy for international travel meals?”

The “Lazy” Approach (Unoptimized Retrieval)

The developer sets up a basic RAG pipeline. The retriever identifies five policy documents that seem relevant based on keyword matching. Instead of extracting the specific paragraphs related to meal reimbursements, the system takes the “easy” route: it retrieves all five documents in their entirety and stuffs them into the system prompt to ensure the answer is somewhere in the context.

Let’s assume these five PDFs total roughly 80,000 tokens.

The Cost Calculation

Let’s look at the math using an illustrative price for a flagship model like GPT-4o: $5.00 per million input tokens (its launch price; rates change often, so check your provider’s current pricing).

- Input Context: 80,000 tokens

- Price per Token: $0.000005

- Cost for ONE Turn: 80,000 * $0.000005 = **$0.40**

Forty cents might not sound like a disaster. But that is the cost just to load the context before the model has generated a single word of output.
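The same arithmetic as a tiny Python helper, so you can plug in your own token counts and your provider’s current rate. This is a minimal sketch; the function name is hypothetical and the $5.00 figure is the illustrative price used above, not a quoted rate.

```python
def input_cost_usd(input_tokens: int, price_per_million_usd: float) -> float:
    """Cost of the prompt (input) side of a single request."""
    return input_tokens * price_per_million_usd / 1_000_000


# The "lazy" RAG turn: 80,000 tokens at an assumed $5.00 per million input tokens.
print(f"${input_cost_usd(80_000, 5.00):.2f}")  # prints "$0.40"
```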

The disaster happens in the follow-up.

The user then asks: “Does that include alcohol?”

Because LLMs are stateless, to answer this follow-up question while maintaining conversational continuity, you must send the entire 80,000-token context again, plus the first Q&A pair.

If that user engages in a 10-turn conversation to clarify the policy, you are not paying $0.40. You are paying at least $0.40 x 10, because the growing chat history rides along on every turn as well.

That single user session just cost at least $4.00 in pure input costs, mostly to reprocess roughly 79,500 tokens of irrelevant data about domestic travel, IT security, and HR complaints on every turn, just to answer a handful of narrow questions about international meals.
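Because every turn resends the full context plus the accumulated history, the true session cost is a little higher than a flat $0.40 x 10. A minimal sketch, assuming a hypothetical fixed token count per question/answer exchange and ignoring the tokens of the new question itself:

```python
def session_input_cost_usd(
    context_tokens: int,
    turns: int,
    tokens_per_exchange: int,
    price_per_million_usd: float,
) -> float:
    """Total input cost of a session that resends the full context on every turn.

    Turn k (0-indexed) sends the retrieved context plus the k earlier
    question/answer exchanges. The new question itself is ignored for simplicity.
    """
    total_tokens = 0
    for k in range(turns):
        total_tokens += context_tokens + k * tokens_per_exchange
    return total_tokens * price_per_million_usd / 1_000_000


# Lower bound from the text: history growth ignored.
print(f"${session_input_cost_usd(80_000, 10, 0, 5.00):.2f}")    # prints "$4.00"
# Hypothetical 300 tokens per Q&A exchange: the bill only grows.
print(f"${session_input_cost_usd(80_000, 10, 300, 5.00):.4f}")  # prints "$4.0675"
```

The history term grows linearly per turn, so its total grows quadratically with conversation length; with an 80,000-token context, though, the resent documents still dominate the bill.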

Multiply this “lazy developer tax” across thousands of users and millions of interactions per month, and you have the recipe for an astronomical, indefensible AI bill.

Why Oversized Context Hurts Performance Too

If cost were the only downside, some organizations might eat the bill in exchange for simpler engineering. However, oversized context windows also actively degrade the technical performance of your AI applications.

More data does not always equal better answers.

1. The “Lost in the Middle” Phenomenon

Research into how LLMs use long inputs (notably Liu et al., 2023, “Lost in the Middle: How Language Models Use Long Contexts”) has documented a persistent issue known as the “Lost in the Middle” phenomenon.

While models are excellent at retrieving information placed at the very beginning (primacy bias) or very end (recency bias) of a long context window, their retrieval accuracy drops significantly when relevant facts are buried in the middle of a massive block of text.

By stuffing 80k tokens of noise into your prompt, you aren’t just increasing costs; you are lowering the probability that the model will find and use the one relevant needle in that haystack. You are paying more for worse accuracy.

2. Latency and Time-To-First-Token (TTFT)

We often forget that input tokens must be processed before output tokens can be generated. The model’s attention mechanism must attend to every single token you provide.

Processing an 80k- or 128k-token prompt is compute-intensive. Even on robust infrastructure, this introduces significant latency, increasing the Time-To-First-Token (TTFT). A user asking a simple question may end up staring at a loading spinner for 10-15 seconds while the model “reads” a small novel before it begins typing. In many production use cases, this latency is unacceptable.

Technical Strategies for Manual Context Optimization

Effective context management is no longer optional; it is a required discipline for viable production AI. If you are managing this manually right now, here are two architectural patterns to reduce bloat.

1. Better RAG (Precision Retrieval over Volume)

The root cause of the $4.00 session described above is a failure of retrieval. The goal of RAG is not to retrieve documents; it is to retrieve knowledge.

- Stop chunking by document boundaries. Your unit of retrieval should never be “an entire PDF.”

- Implement semantic chunking. Break down your source data into meaningful, atomic ideas (paragraphs or subsections).

- Enforce a strict top-k. When a query comes in, retrieve only the 3 to 5 most semantically relevant chunks.

A well-architected RAG system might retrieve 2,000 tokens of highly relevant context instead of 80,000 tokens of noisy documents. This alone reduces input costs by 97.5% while likely improving answer quality by reducing distracting noise.
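A minimal sketch of paragraph-level chunking with a strict top-k cutoff. To stay dependency-free, it scores chunks with a bag-of-words cosine similarity, which is lexical rather than semantic; a production system would swap in an embedding model and a vector index. All function names here are hypothetical, and the policy snippets are toy data.

```python
import math
import re
from collections import Counter


def chunk_by_paragraph(document: str) -> list[str]:
    """Split a document into paragraph-sized chunks (blank-line separated)."""
    return [p.strip() for p in re.split(r"\n\s*\n", document) if p.strip()]


def bag_of_words(text: str) -> Counter:
    """Lowercase word counts: a lexical stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k_chunks(query: str, documents: list[str], k: int = 3) -> list[str]:
    """Chunk every document, score each chunk against the query, keep only the best k."""
    q = bag_of_words(query)
    chunks = [c for doc in documents for c in chunk_by_paragraph(doc)]
    chunks.sort(key=lambda c: cosine(q, bag_of_words(c)), reverse=True)
    return chunks[:k]


policies = [
    "Domestic travel: economy fares only.\n\n"
    "International travel meals are reimbursed up to a daily cap.",
    "IT security: rotate passwords every 90 days.",
]
print(top_k_chunks("reimbursement for international travel meals", policies, k=1))
# prints ['International travel meals are reimbursed up to a daily cap.']
```

The winning chunk matches on “international”, “travel”, and “meals”, but “reimbursed” does not match “reimbursement”; closing that kind of gap is what semantic embeddings are for.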

2. The Summarization Chain Pattern

Sometimes, you do need information from a large document, but you don’t need the verbatim text. In these cases, use a two-step “chain” architecture.

- Step 1 (Compression): Pass the large retrieved documents through a faster, cheaper model (e.g., Claude 3 Haiku, Llama 3 8B) with instructions to “summarize the key facts regarding [User Query] from this text.”

- Step 2 (Reasoning): Take the concise summary generated in Step 1 and feed it, along with the user question, into your expensive, more capable model (e.g., GPT-4o).

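The compression step still reads the full documents, but at a fraction of the per-token price, and only its short output is sent to the expensive model. You pay a small amount for the cheap compression step to save a massive amount on the expensive reasoning step.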
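The two-step chain can be sketched with the models passed in as plain functions, so the control flow is visible without committing to any vendor SDK. `cheap_model` and `expensive_model` are hypothetical callables (prompt in, text out); the demo uses offline stubs that you would replace with real API calls.

```python
from typing import Callable

# Hypothetical model clients: each takes a prompt string and returns the model's reply.
ModelFn = Callable[[str], str]


def summarization_chain(
    question: str,
    documents: list[str],
    cheap_model: ModelFn,
    expensive_model: ModelFn,
) -> str:
    """Two-step chain: compress with a cheap model, reason with an expensive one."""
    # Step 1 (compression): the cheap model reads each large document
    # and returns only the facts relevant to the question.
    summaries = [
        cheap_model(
            f"Summarize the key facts regarding '{question}' from this text:\n\n{doc}"
        )
        for doc in documents
    ]
    # Step 2 (reasoning): only the short summaries reach the expensive model.
    notes = "\n".join(f"- {s}" for s in summaries)
    return expensive_model(
        f"Answer the question using these notes.\n\nNotes:\n{notes}\n\nQuestion: {question}"
    )


# Offline demo with stub models; replace them with real API calls.
answer = summarization_chain(
    "What is the reimbursement policy for international travel meals?",
    ["<full text of policy PDF 1>", "<full text of policy PDF 2>"],
    cheap_model=lambda prompt: "International meals are reimbursed up to a daily cap.",
    expensive_model=lambda prompt: f"Expensive model received {len(prompt)} characters.",
)
print(answer)
```

Injecting the models as functions also makes it easy to log token counts for each step separately and confirm the chain actually saves money for your workload.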

How PromptLeash Automates Context Management

While manual strategies like better chunking and summarization chains are effective, implementing them requires building complex orchestration pipelines, managing multiple model endpoints, and constantly tuning retrieval parameters. It’s heavy technical overhead.

PromptLeash was built to serve as the infrastructure layer that automates context window optimization without requiring constant code changes. We treat context as a scarce resource that must be actively managed.

Here is how the PromptLeash platform tackles the problem:

1. Intelligent Context Trimming

PromptLeash sits between your application and the LLM provider. Before a prompt is sent to the model, our analysis engine evaluates the input context against the current user query. It identifies and trims highly irrelevant text segments that are unlikely to contribute to the answer, dynamically reducing payload size without sacrificing necessary information.
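PromptLeash’s analysis engine is not shown here. To make the general idea of query-aware trimming concrete, the following is only a generic sketch using a crude, hypothetical word-overlap score and threshold; it is not PromptLeash’s implementation.

```python
import re


def words(text: str) -> set[str]:
    """Lowercased word set used for a crude relevance check."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def trim_context(context: str, query: str, min_overlap: float = 0.2) -> str:
    """Drop paragraphs that share too few words with the query, preserving order.

    Relevance = fraction of the query's words that appear in the paragraph.
    This is a hypothetical heuristic, not PromptLeash's actual scoring.
    """
    q = words(query)
    if not q:
        return context  # nothing to score against; leave the context untouched
    kept = [
        p.strip()
        for p in re.split(r"\n\s*\n", context)
        if p.strip() and len(q & words(p)) / len(q) >= min_overlap
    ]
    return "\n\n".join(kept)


context = (
    "Meals abroad are capped per day.\n\n"
    "Passwords rotate every 90 days.\n\n"
    "Alcohol is not reimbursed with meals."
)
# Keeps the two meal-related paragraphs and drops the password one.
print(trim_context(context, "are meals reimbursed abroad"))
```

Unlike top-k retrieval, a threshold keeps every passage that clears the bar, in its original order. A real system would need a far better relevance model than word overlap and safeguards against trimming something the answer depends on.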

2. Automated Session Memory Management

Managing conversational history in multi-turn chats is one of the fastest ways to blow up a context window. The naive approach is to simply append every new Q&A pair to the history.

PromptLeash automates this using smart “rolling windows” and background summarization. As a conversation progresses, older turns aren’t just dropped; they are periodically summarized into concise “memory beads” that retain the core context of the conversation without the verbatim token bloat. This keeps the active context window small and relevant, even in long conversations.
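The rolling-window idea can be sketched in a few lines. This is a hypothetical illustration, not PromptLeash’s code: the last `window` turns stay verbatim, and evicted turns are folded into a running summary by a `summarize` callable (in practice, a cheap model; here, an offline stub that just concatenates).

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class RollingMemory:
    """Keep the last `window` turns verbatim; fold older turns into a running summary.

    `summarize` is a hypothetical callable (e.g. backed by a cheap model) that
    merges the existing summary with the turns being evicted.
    """
    summarize: Callable[[str, list[str]], str]
    window: int = 4
    summary: str = ""
    recent: list[str] = field(default_factory=list)

    def add_turn(self, turn: str) -> None:
        self.recent.append(turn)
        if len(self.recent) > self.window:
            evicted = self.recent[: -self.window]
            self.recent = self.recent[-self.window:]
            self.summary = self.summarize(self.summary, evicted)

    def context(self) -> str:
        """Build the history block that would be sent with the next request."""
        parts = [f"Summary of earlier conversation: {self.summary}"] if self.summary else []
        return "\n".join(parts + self.recent)


# Stub summarizer for an offline demo: it only concatenates; a real one would condense.
memory = RollingMemory(summarize=lambda s, old: (s + " " + " ".join(old)).strip(), window=2)
for turn in ["turn 1", "turn 2", "turn 3"]:
    memory.add_turn(turn)
print(memory.context())
# prints the summary line for "turn 1", then "turn 2" and "turn 3" verbatim
```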

3. Granular Cost Visibility

You cannot optimize what you cannot measure. Standard API billing gives you a lump sum for token usage. The PromptLeash dashboard provides granular visibility, showing developers exactly how spend splits between Input Token Cost (context load) and Output Token Cost (generation).

Seeing in real-time that 90% of your spend is going toward repetitive input context is often the necessary catalyst for engineering teams to prioritize optimization efforts.
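The calculation underneath such a breakdown is simple enough to run against your own usage logs. A minimal sketch, with hypothetical token counts and an assumed output rate of $15.00 per million tokens; substitute your provider’s actual prices.

```python
def cost_breakdown(
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> dict[str, float]:
    """Split spend into input (context load) and output (generation) cost."""
    input_usd = input_tokens * input_price_per_million / 1_000_000
    output_usd = output_tokens * output_price_per_million / 1_000_000
    total = input_usd + output_usd
    return {
        "input_usd": input_usd,
        "output_usd": output_usd,
        "input_share": input_usd / total if total else 0.0,
    }


# Hypothetical 10-turn "lazy" session: 800,000 input tokens, 3,000 output tokens,
# at assumed rates of $5.00 (input) and $15.00 (output) per million tokens.
report = cost_breakdown(800_000, 3_000, 5.00, 15.00)
print(f"input ${report['input_usd']:.2f}, output ${report['output_usd']:.3f}, "
      f"input share {report['input_share']:.1%}")
```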

Conclusion

The massive context windows offered by modern LLMs are a powerful tool, but they are not free storage spaces. They are expensive, active processing memory.

Treating them as dump trucks for uncurated data is bad engineering and worse economics. Every irrelevant token you send is a micro-tax on your budget and a drag on your performance.

By shifting from a mindset of “maximum context” to “minimum necessary context,” you can dramatically improve the unit economics of your AI features.

Are your context windows bloated with expensive noise?

Stop paying the “lazy developer tax.” Try PromptLeash’s context optimization tools today. See how automated trimming and smart memory management can reduce your input token spend by up to 70% while improving model latency and accuracy.